- Blog

- Logitech quickcam orbit drivers

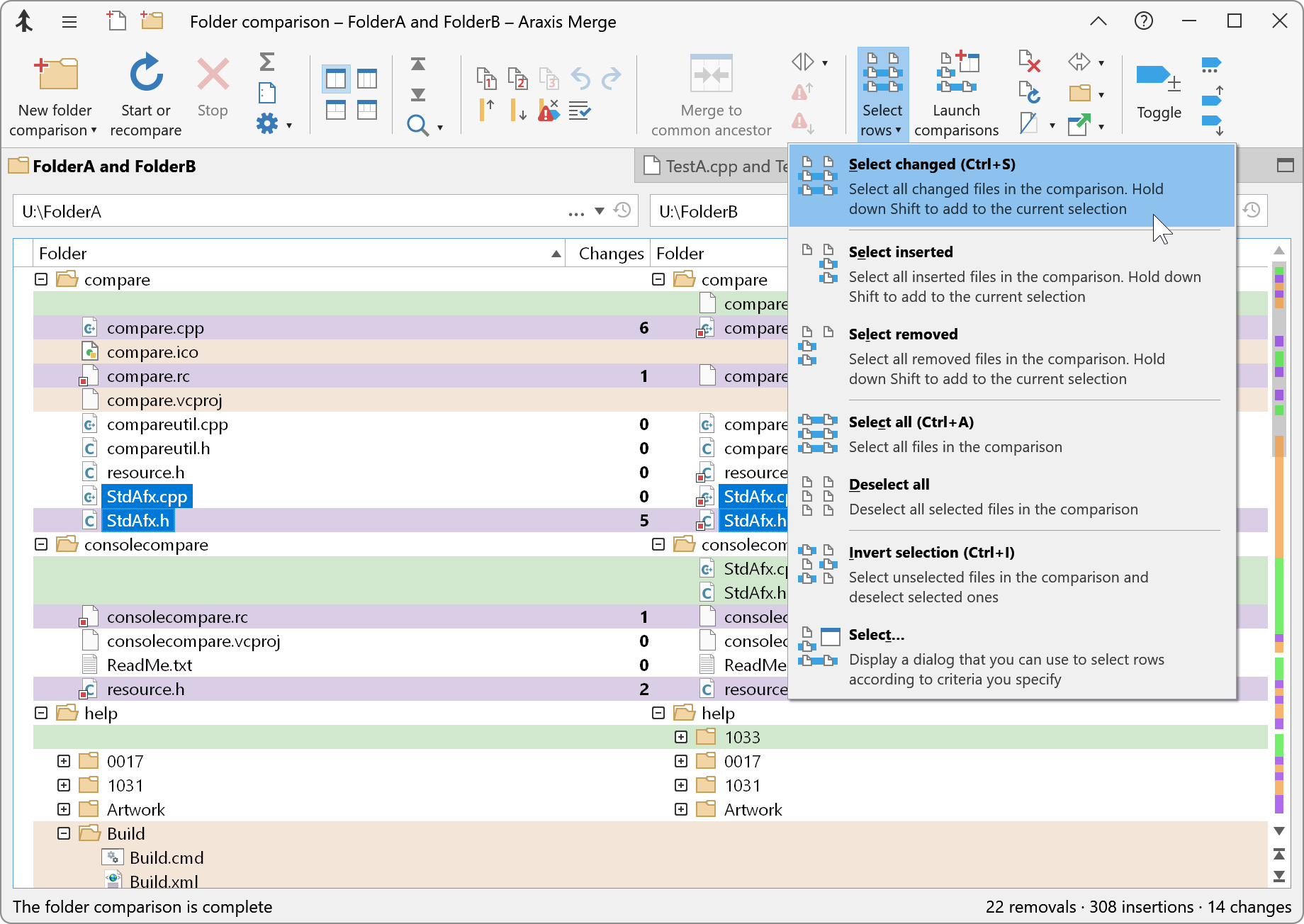

- Does araxis merge version 6-x work on windows 10-

- Nvidia shield controller wont turn on

- Microsoft sql server management studio 2014 download

- Xtools pro gis

- Autodesk bim 360

- Bee gees greatest hits album

- Aramaic bible in plain english free download

- Garrys mod gameplay

- Mobile intel gma 4500mhd driver

- Forgot unlock pattern

- Wii theme song similar to zelda shop

- Forge minecraft 1-11-2

- Citrix receiver 4-4 download

- Activator rail recipe

- X man mobile game free download

- 8 ball ruler 1-1 free download

- Serveurs cccam

- Autodesk inventor 3d print

- Da vinci code movie part 2

- Brenda all that nickelodeon

- Rpg_rt directdraw error

- Bricks n balls level 469

- Age of empires 3 for macintosh

- Youtube jason statham movies

- Scarlet heart ryeo eng sub dramacool

- The location of `hive_cli.jar`, which is used when submitting jobs in a separate JVM.
- The location of the plugin jars that contain implementations of user-defined functions (UDFs) and SerDes.
- The locations of reloadable plugin jars, given as comma-separated folders or jars. These jars can be used just like the auxiliary plugin jars for creating UDFs or SerDes, and they can be renewed (added, removed, or updated) by executing the Beeline reload command without having to restart HiveServer2.
- The permission for the user-specific scratch directories that get created in the root scratch directory (default value: /tmp/$).
- Scratch space for Hive jobs when Hive runs in local mode.
- Whether to combine small input files so that fewer mappers are spawned.
- Whether to use map-side aggregation in Hive GROUP BY queries. Default value: true in Hive 0.3 and later; false in Hive 0.2.
- Whether there is skew in the data, so that GROUP BY queries can be optimized for it.
- The number of rows after which a size check of the grouping keys/aggregation classes is performed.
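To make map-side aggregation and the periodic size check concrete, here is a minimal Python sketch of a `COUNT(*) ... GROUP BY key` query. It is not Hive's implementation: `mapper`, `reducer`, `check_interval`, and `max_keys` are hypothetical names, and flushing partial results when the hash table grows past `max_keys` is an assumed policy, since the property description says only that a size check happens after a given number of rows.

```python
from collections import Counter
from typing import Dict, Iterable, Iterator, List, Tuple


def mapper(keys: Iterable[str], check_interval: int = 100_000,
           max_keys: int = 10_000) -> Iterator[Tuple[str, int]]:
    """Map-side partial aggregation for COUNT(*) ... GROUP BY key.

    Every `check_interval` rows the in-memory hash table size is checked;
    if it holds more than `max_keys` distinct keys (hypothetical policy),
    the partial counts are emitted early and the table is cleared.
    """
    if check_interval <= 0:
        raise ValueError("check_interval must be positive")
    table: Dict[str, int] = {}
    for i, key in enumerate(keys, start=1):
        table[key] = table.get(key, 0) + 1
        if i % check_interval == 0 and len(table) > max_keys:
            yield from table.items()
            table.clear()
    # Emit whatever partial counts remain at the end of this input split.
    yield from table.items()


def reducer(partials: Iterable[Tuple[str, int]]) -> Dict[str, int]:
    """Merge partial counts from all mappers into the final GROUP BY result."""
    totals: Counter = Counter()
    for key, count in partials:
        totals[key] += count
    return dict(totals)


if __name__ == "__main__":
    splits: List[List[str]] = [["a", "b", "a"], ["b", "b", "c"]]
    shuffled = [p for split in splits for p in mapper(split)]
    print(len(shuffled), reducer(shuffled))
```

With the default knobs, the two example splits send 4 partial records to the reducer instead of 6 raw rows; forcing early flushes with `check_interval=2, max_keys=1` sends 5 records, and the final counts are the same either way.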

- The default number of reduce tasks per job, typically set to a prime close to the number of available hosts. Hadoop sets this to 1 by default, whereas Hive uses -1 as its default value; with -1, Hive automatically determines the number of reducers.
- The input size handled by each reducer. Before Hive 0.14.0 the default is 1 GB, so a 10 GB input uses 10 reducers; in Hive 0.14.0 and later the default is 256 MB, so a 1 GB input uses 4 reducers.
- The maximum number of reducers that will be used. Default value: 999 before Hive 0.14.0; 1009 in Hive 0.14.0 and later. If the default number of reduce tasks is negative, Hive uses this value as the upper limit when it determines the number of reducers automatically.
- Whether query fragments run in a container or in LLAP. With llap, LLAP nodes are used during the execution of tasks.
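The reducer arithmetic above can be sketched in Python as follows. This is an illustration under stated assumptions, not Hive's code: `estimate_reducers` and its parameter names are hypothetical, 256 MB is taken as 256,000,000 bytes, and an explicitly configured non-negative value is assumed to be used as-is.

```python
def estimate_reducers(input_bytes: int,
                      configured_reduce_tasks: int = -1,
                      bytes_per_reducer: int = 256_000_000,
                      max_reducers: int = 1009) -> int:
    """Estimate how many reducers a job gets (illustrative sketch).

    configured_reduce_tasks: per-job reduce-task setting; a negative value
        (Hive's default is -1) means "derive it from the input size".
    bytes_per_reducer: input size handled by each reducer (256 MB assumed).
    max_reducers: upper bound on the derived count (1009 per the text).
    """
    if configured_reduce_tasks >= 0:
        # Assumption: an explicit non-negative setting is used as-is.
        return configured_reduce_tasks
    if input_bytes < 0 or bytes_per_reducer <= 0:
        raise ValueError("input_bytes must be >= 0 and bytes_per_reducer > 0")
    # Integer ceiling division: a partially filled reducer still counts.
    reducers = (input_bytes + bytes_per_reducer - 1) // bytes_per_reducer
    # Never fewer than one reducer, never more than the cap.
    return max(1, min(max_reducers, reducers))


if __name__ == "__main__":
    print(estimate_reducers(1_000_000_000))
    print(estimate_reducers(10_000_000_000,
                            bytes_per_reducer=1_000_000_000,
                            max_reducers=999))
```

The first call gives 4, matching the 1 GB example for Hive 0.14.0 and later, and the second gives 10, matching the pre-0.14.0 example. Rounding up gives a partially filled share its own reducer, while the cap keeps very large inputs from launching an unbounded number of reducers.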

The execution engine. Options are: mr (MapReduce, the default), tez (Tez execution, for Hadoop 2 only), or spark (Spark execution, for Hive 1.1.0 onward). While mr remains the default for historical reasons, it is itself a historical engine, is deprecated in the Hive 2 line (HIVE-12300), and may be removed without further warning. See Hive on Tez and Hive on Spark for more information, and see the Tez section and the Spark section below for their configuration properties.
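A small Python sketch of choosing an engine from these options while respecting the stated version constraints. It assumes the setting is applied with a HiveQL `SET hive.execution.engine=...;` statement; the helper `engine_set_statement` is hypothetical, and the Hadoop 2 requirement for tez is not checked.

```python
import warnings
from typing import Tuple

VALID_ENGINES = ("mr", "tez", "spark")


def engine_set_statement(engine: str, hive_version: Tuple[int, int, int]) -> str:
    """Build a HiveQL SET statement that selects the execution engine.

    Encodes two constraints from the text: spark needs Hive 1.1.0 or later,
    and mr is deprecated in the Hive 2 line. The Hadoop 2 requirement for
    tez is not modeled.
    """
    name = engine.strip().lower()
    if name not in VALID_ENGINES:
        raise ValueError(f"unknown execution engine {engine!r}; "
                         f"expected one of {VALID_ENGINES}")
    if name == "spark" and hive_version < (1, 1, 0):
        raise ValueError("spark execution requires Hive 1.1.0 or later")
    if name == "mr" and hive_version >= (2, 0, 0):
        warnings.warn("mr is deprecated in the Hive 2 line (HIVE-12300)",
                      DeprecationWarning, stacklevel=2)
    # Assumed property name; check it against your Hive version's docs.
    return f"SET hive.execution.engine={name};"


if __name__ == "__main__":
    print(engine_set_statement("Tez", (2, 3, 0)))
```

Raising on spark for older releases, but only warning on mr, mirrors the text: spark is unavailable before Hive 1.1.0, whereas mr still works in Hive 2 but is deprecated.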